We had one week to run discovery, two people to do it, and eight weeks to deliver — so instead of debating CMS options in theory, we used AI to rapidly prototype multiple paths and let the client make an evidence-based choice.

One-week discovery, real stakes

We were brought into a project to migrate parts of an existing web application into a Content Management System (CMS) so the marketing team could ship content without constant developer involvement.

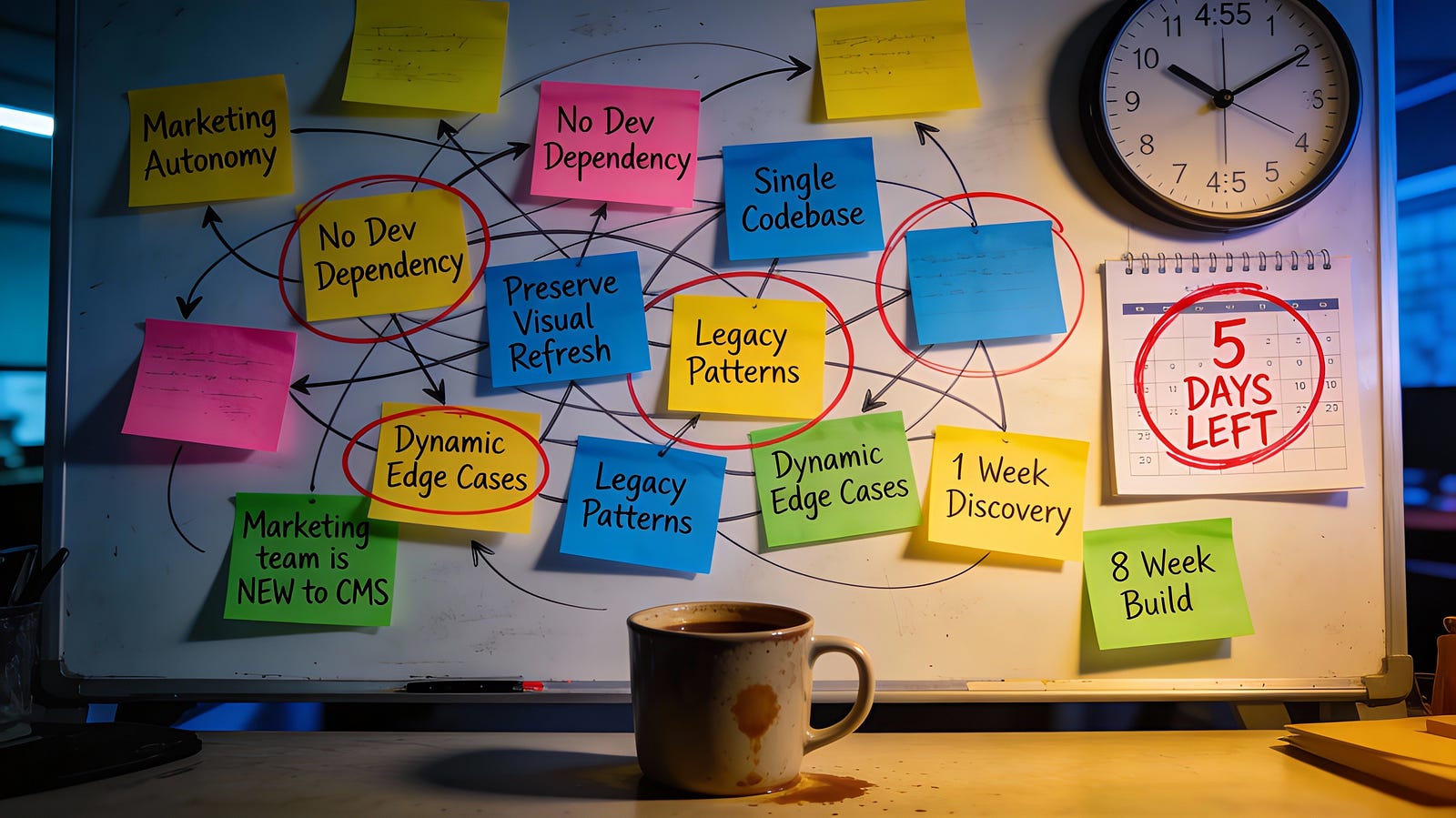

The constraint that shaped everything: we had less than a week to complete discovery (including presenting working prototypes), and then eight weeks for actual development — while maintaining two independently deployed websites inside a single codebase.

There was also a genuine dilemma from day one: we initially leaned toward a headless approach because it better matched the architecture and long-term workflow, but the client strongly preferred a full WordPress solution — mainly because WordPress is popular, widely supported, and feels like the safe default.

Constraints

At first glance, the scope sounded clean: migrate “static” pages into a CMS. In reality, some “static-looking” pages contained dynamic behaviour, which meant we needed strict boundaries: CMS owns content and structure; the application still owns logic and interactivity.
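Below is a minimal TypeScript sketch of that boundary. It assumes the CMS returns each page as an ordered list of typed, content-only sections, and that interactive pieces are referenced by a key the application resolves to its own code. Every name here (`Section`, `widgetRegistry`, `renderPage`, the `newsletter-signup` widget) is hypothetical and does not come from our codebase or any CMS API. The string-based HTML output is for illustration only; real code would escape content and use a proper view layer.

```typescript
// Hypothetical content shapes: the CMS stores only content and structure.
type HeroSection = { type: "hero"; heading: string; body: string };
type RichTextSection = { type: "richText"; html: string };
// An interactive block is referenced by key; the CMS never stores its logic.
type WidgetSection = { type: "widget"; widgetKey: string };

type Section = HeroSection | RichTextSection | WidgetSection;

interface Page {
  slug: string;
  sections: Section[]; // order is controlled by editors in the CMS
}

// Hypothetical application-owned behaviour, keyed by a stable identifier.
const widgetRegistry: Record<string, () => string> = {
  "newsletter-signup": () => '<form data-widget="newsletter-signup"></form>',
};

// Illustrative only: no escaping, string output instead of real components.
function renderSection(section: Section): string {
  switch (section.type) {
    case "hero":
      return `<header><h1>${section.heading}</h1><p>${section.body}</p></header>`;
    case "richText":
      return `<div>${section.html}</div>`;
    case "widget": {
      const widget = widgetRegistry[section.widgetKey];
      // Unknown keys render nothing instead of breaking the page.
      return widget ? widget() : "";
    }
  }
}

function renderPage(page: Page): string {
  return page.sections.map(renderSection).join("\n");
}
```

With this split, editors can add, reorder or remove a widget section, but they can't change what the widget does. That stays in version-controlled application code.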

Here’s the situation we were optimising for:

Requirements: Marketing can edit pages and publish blog posts without engineering involvement.

Non-negotiables: Preserve the recent visual refresh and keep the UI consistent.

Reality check: Mixed legacy patterns/templates in the codebase; “static” pages with hidden dynamic edge cases.

Human constraint: The marketing team had little or no CMS experience, so workflow simplicity mattered as much as raw flexibility.

Exit strategy: Because a CMS was new to both the project and its stakeholders, we needed a realistic way to roll back or change direction later without turning it into a rewrite.

That’s a lot to be confident about in under a week.
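The exit-strategy constraint is the most architectural of these. One way to honour it is to make page code depend on a small content interface instead of a specific CMS. This is a sketch under our own assumptions, not the project's actual code. A WordPress or Strapi adapter would implement the same interface by calling that CMS's API (not shown). Rolling back would mean switching to an adapter that serves the existing in-repo content. `PageContent`, `ContentSource`, `StaticContentSource` and `loadPageTitle` are hypothetical names.

```typescript
// Hypothetical shape of the data pages need, independent of any CMS.
interface PageContent {
  slug: string;
  title: string;
  body: string;
}

// The only contract page code depends on. A WordPress or Strapi adapter
// would implement this by calling that CMS's API (not shown here).
interface ContentSource {
  getPage(slug: string): Promise<PageContent | null>;
}

// Rollback path: serve the content that currently lives in the codebase.
class StaticContentSource implements ContentSource {
  private readonly pages: Map<string, PageContent>;

  constructor(pages: Map<string, PageContent>) {
    this.pages = pages;
  }

  async getPage(slug: string): Promise<PageContent | null> {
    return this.pages.get(slug) ?? null;
  }
}

// Page code never knows which backend it is talking to.
async function loadPageTitle(source: ContentSource, slug: string): Promise<string> {
  const page = await source.getPage(slug);
  return page ? page.title : "Not found";
}
```

With this shape, changing backends becomes a wiring decision made in one place. That is what keeps a reversal from turning into a rewrite.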

What the client used to evaluate options

To avoid a tool debate, we aligned early on the editor workflows the client actually cared about.

Enabling non-technical teams to update content.

Rearranging and managing components on a page.

Adding/removing sections and controlling ordering.

Independently creating new pages and blog content.
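The second and third workflows come down to editing an ordered list of sections. Here is a rough illustration. It assumes a page is stored as an array of sections, which is roughly how a Strapi dynamic zone or a block-based editor presents a page. Under that assumption, the operations editors need are small pure functions. `Section`, `addSection`, `removeSection` and `moveSection` are hypothetical helpers, not part of any CMS API.

```typescript
// Hypothetical minimal section shape; real sections would carry content fields.
interface Section {
  id: string;
  type: string;
}

// Insert a section at a position (clamped to the valid range).
function addSection(sections: readonly Section[], section: Section, index: number): Section[] {
  const at = Math.max(0, Math.min(index, sections.length));
  return [...sections.slice(0, at), section, ...sections.slice(at)];
}

// Remove a section by id; unknown ids leave the list unchanged.
function removeSection(sections: readonly Section[], id: string): Section[] {
  return sections.filter((s) => s.id !== id);
}

// Move a section to a new position, shifting the others along.
function moveSection(sections: readonly Section[], from: number, to: number): Section[] {
  if (from < 0 || from >= sections.length) return [...sections];
  const result = [...sections];
  const [moved] = result.splice(from, 1);
  const target = Math.max(0, Math.min(to, result.length));
  result.splice(target, 0, moved);
  return result;
}
```

Each function returns a new array instead of mutating the original. That makes each edit easy to preview or undo, which matters when editors are new to a CMS.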

What we did differently: parallel prototyping

The real problem wasn’t “pick a CMS.” It was reducing uncertainty fast enough to make a decision we could stand behind.

So we split the work in parallel as a two-person team:

One of us took WordPress: research, local setup, and a small prototype that mirrored the kind of pages we actually needed to migrate.

The other took Strapi: research, local setup, and a comparable prototype aimed at the same content and workflow goals.

We compared findings daily and used our knowledge of the existing codebase to sanity-check every conclusion against real integration constraints.

AI helped most when it pulled us out of “documentation land” and into “working baseline” territory — fast. We used it to:

Accelerate setup and configuration when we got blocked — so we didn’t burn half of discovery on environment friction.

Turn vague requirements into concrete evaluation questions, such as “What would content modelling look like for these page types?” or “What would editors actually do day to day?”

Surface common integration pitfalls early enough to test them in the prototype, not discover them mid-build.

The key is that AI didn’t make the decision. It reduced the cost of testing assumptions.

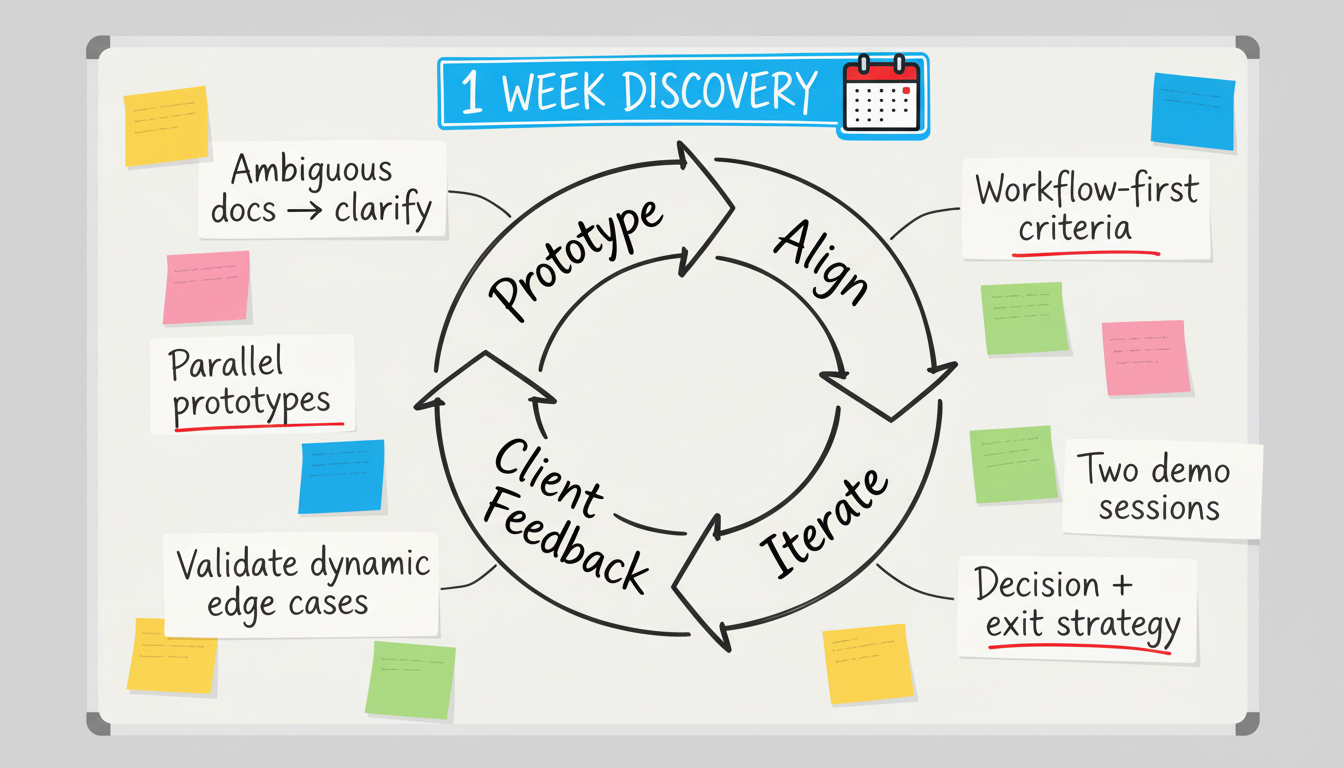

The discovery loop that changed the decision

By the end of discovery, we weren’t presenting an opinion — we were presenting options the client could interact with.

Discovery wasn’t linear; it ran as a loop: prototype → align → client feedback → repeat.

We showed three paths, not one:

Full WordPress.

Headless WordPress.

Strapi (headless).

This shifted the conversation from “what’s popular” to “what workflow will we actually run for years.” The client could see what editors could do, what felt confusing, and what would create ongoing engineering overhead.

We also made the ecosystem tradeoff explicit. We could stay in the JavaScript/TypeScript world we already worked in. Alternatively, we could introduce a separate CMS-side ecosystem (PHP plus WordPress conventions and plugins) and own it long term.

The most important outcome: we didn’t have to “convince” the client that headless + Strapi was better. We gave them enough concrete evidence (pros and cons plus working prototypes) to decide for themselves. The process also surfaced questions that often appear later as scope creep. Here they came up early, while change was still cheap.

What AI changed (and what it didn’t)

AI accelerated discovery, but it never owned decision-making.

AI is an amplifier, not an authority.

Where AI helped:

Getting to working prototypes quickly, which let us evaluate real workflows instead of debating features.

Narrowing the option space faster, so our proofs of concept targeted the highest-risk unknowns.

Making it realistic to explore multiple options deeply enough to be honest about tradeoffs under a hard timeline.

Where human judgement took over:

Understanding our codebase’s historical quirks and the real cost of “static-looking” pages with dynamic behaviour.

Balancing delivery risk against long-term ownership for both engineering and marketing.

Managing client dynamics: respecting the WordPress preference, while still creating a process where the client could evaluate alternatives without feeling pushed.

Protecting an exit strategy: ensuring the approach wouldn’t paint us into a corner if adoption or constraints changed.

Reflection

The biggest lesson wasn’t that AI produced better answers. It was that AI made it feasible to produce better evidence within an unrealistic timeline.

By using AI to compress time-to-prototype, we gave the client what discovery often fails to deliver under pressure: a chance to evaluate real workflows (page composition, section ordering, new page/blog creation) before committing. That’s why the client could choose Strapi confidently: they had experienced the tradeoffs instead of being sold a conclusion.

That’s the approach I’d reuse: define workflow-first criteria (including an exit strategy), parallelise prototypes, and treat AI as leverage — not judgement.

Use AI to accelerate building — not deciding — so the final decision is owned by humans and backed by something real.